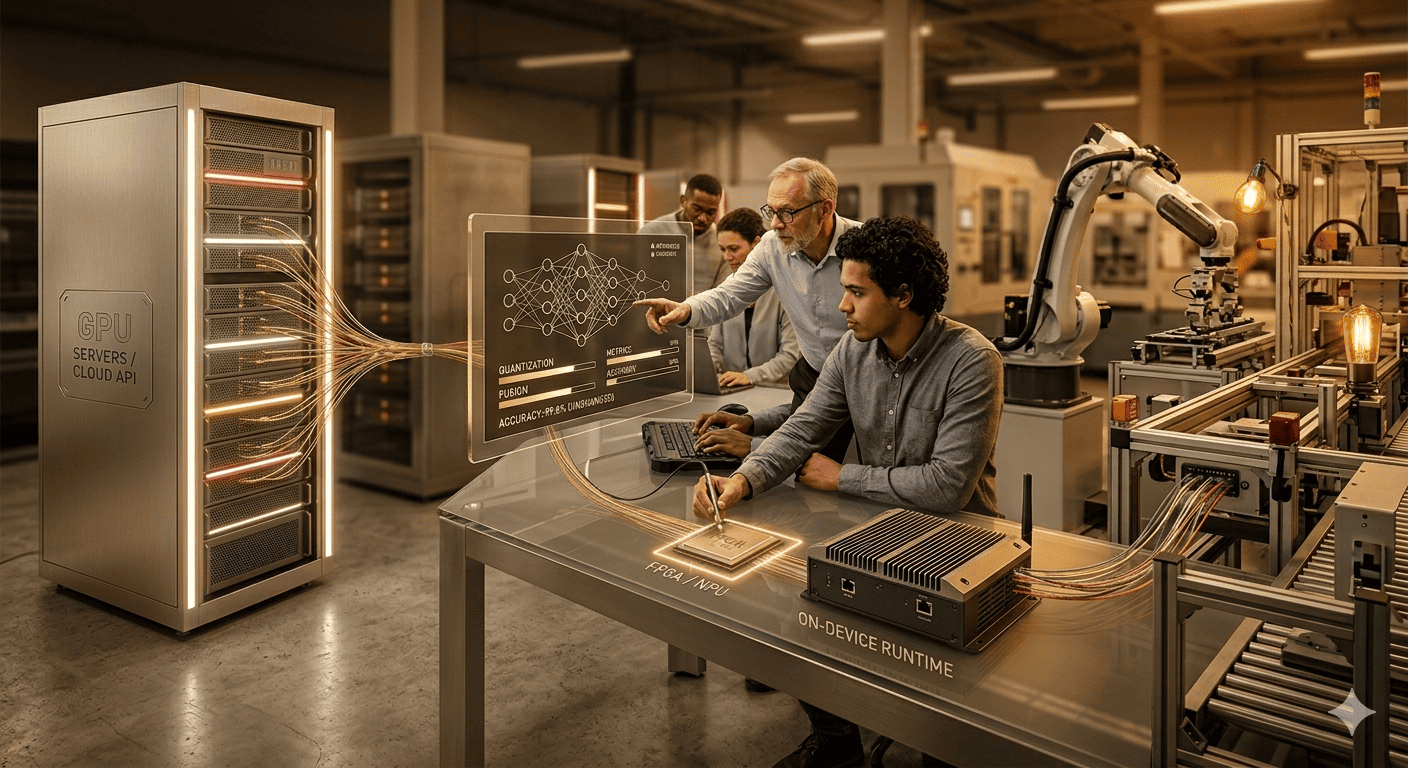

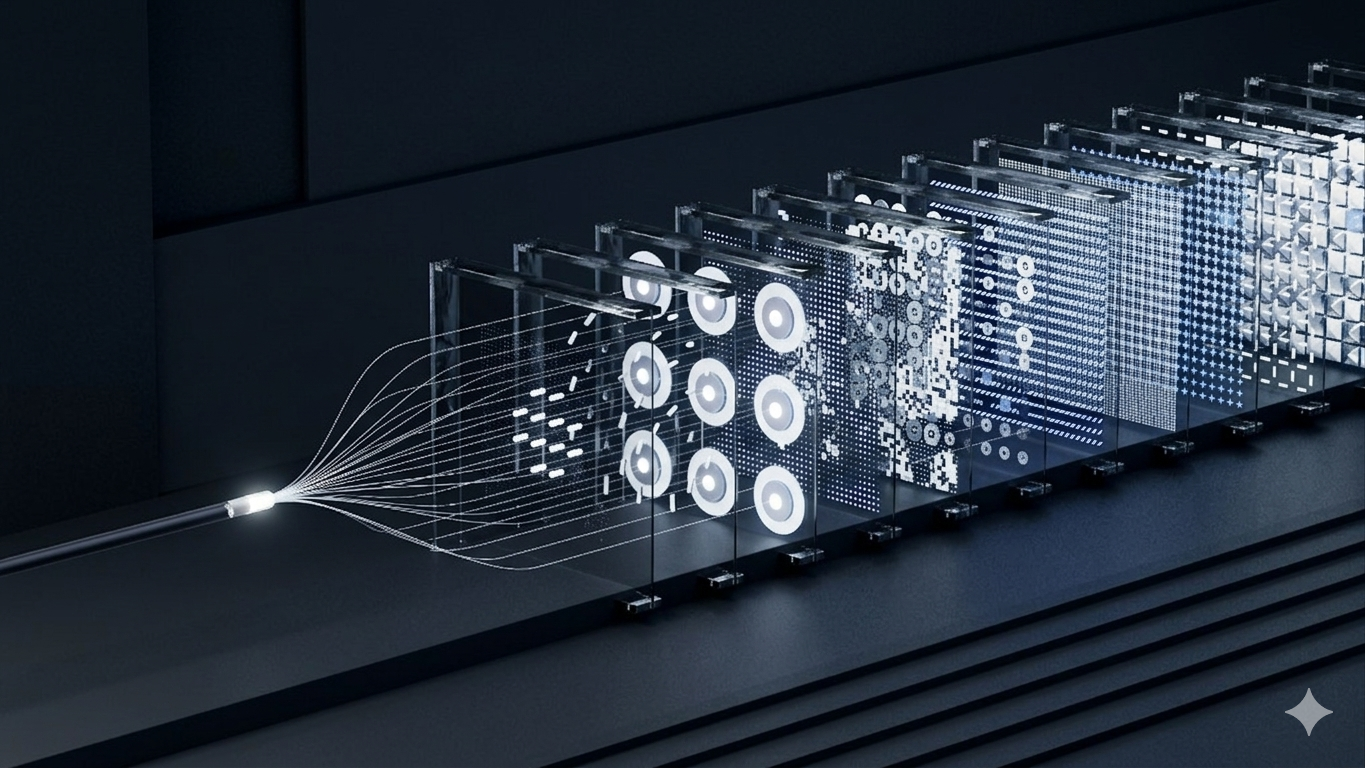

The Falcon Sorting Platform is YantraVision's FPGA-powered optical sorting system that delivers hard real-time bulk sorting decisions in under 2 milliseconds. Built on a three-layer architecture — custom FPGA hardware, configurable multi-spectral sensing (RGB, SWIR, NIR), and the Clever Sight AI engine — Falcon serves OEM machine builders deploying sorting lines across agriculture, recycling, textiles, and minerals.

Sensing Layer

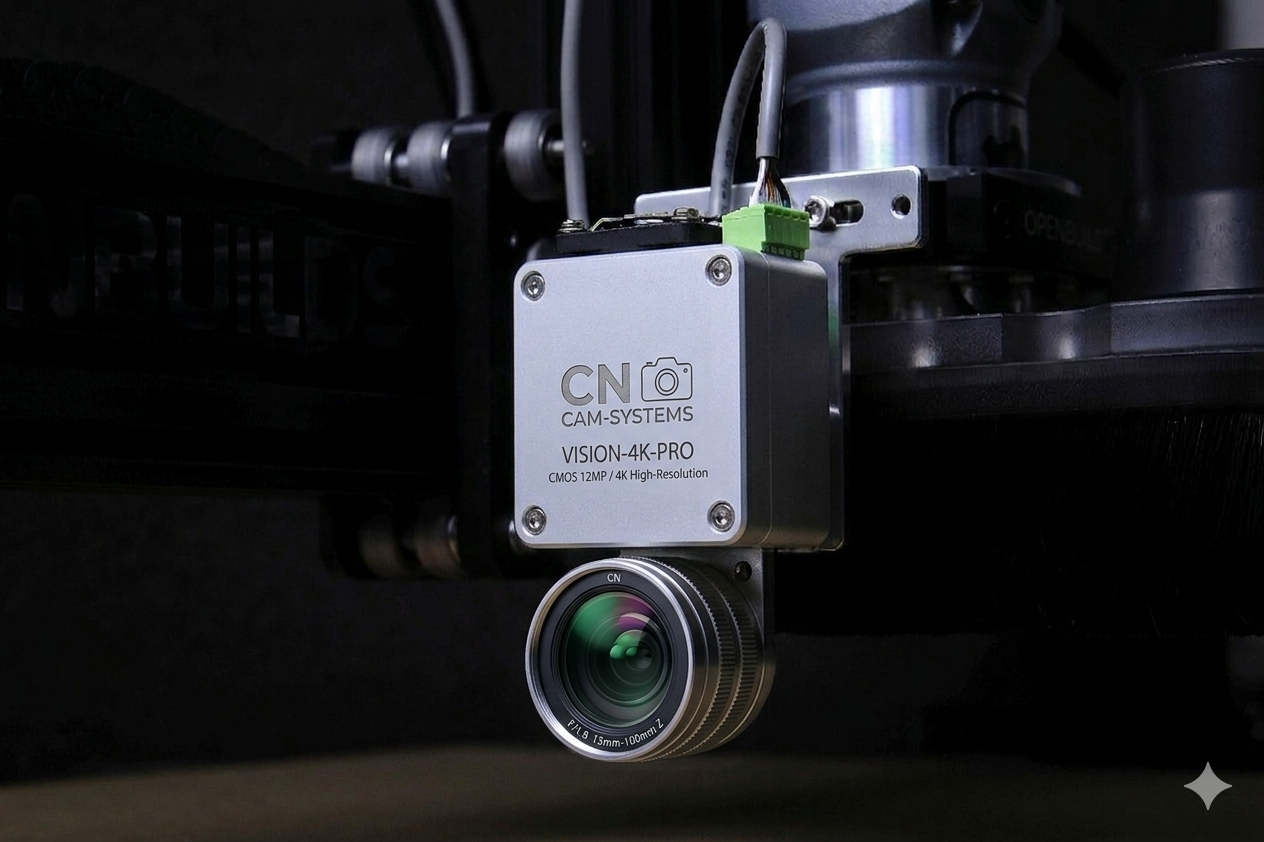

Configurable cameras and illumination — RGB, SWIR, NIR, and combinations.

FPGA Core

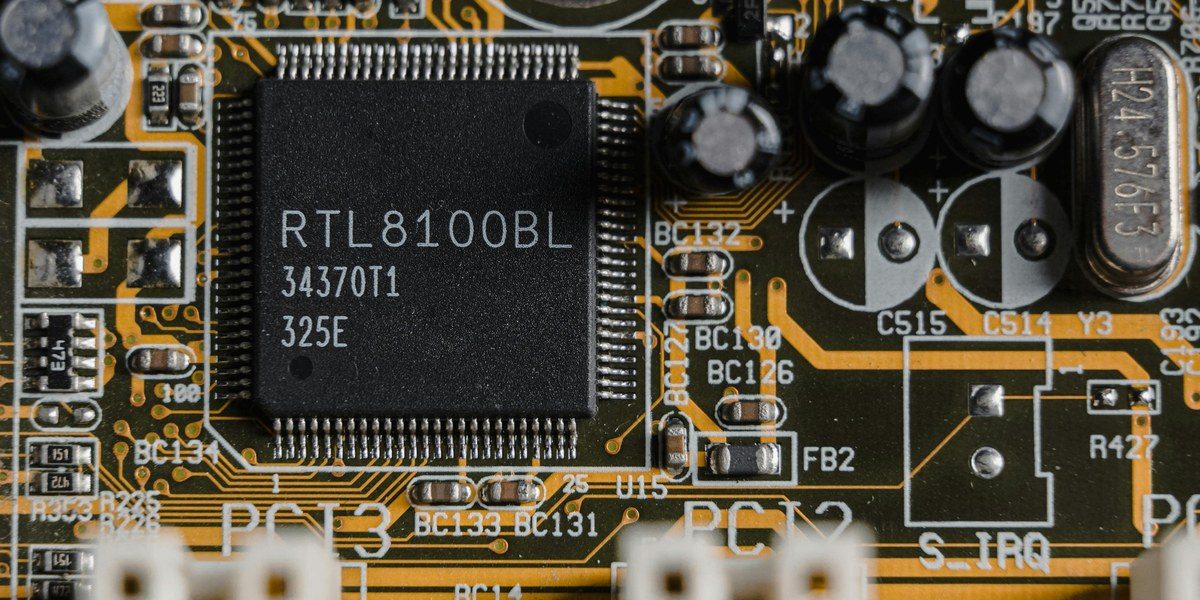

Hard real-time, <2 ms cycle. Custom boards, YantraVision IP.

Clever Sight Engine (CSE)

Analyses data and auto-tunes parameters continuously.

The central claim of Falcon is hard real-time processing. Every pixel from every line scan is processed within a deterministic cycle of under 2 milliseconds — no operating system, no scheduling jitter, no unpredictability.

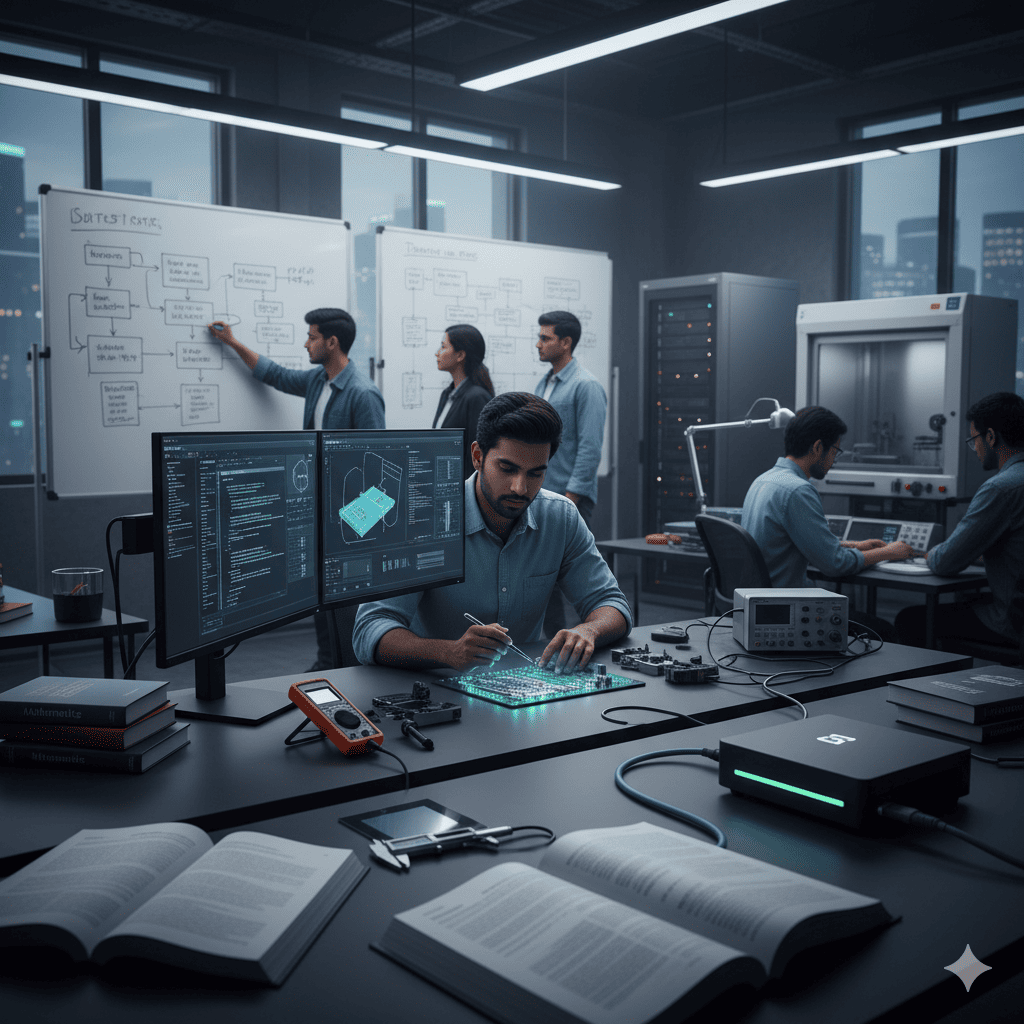

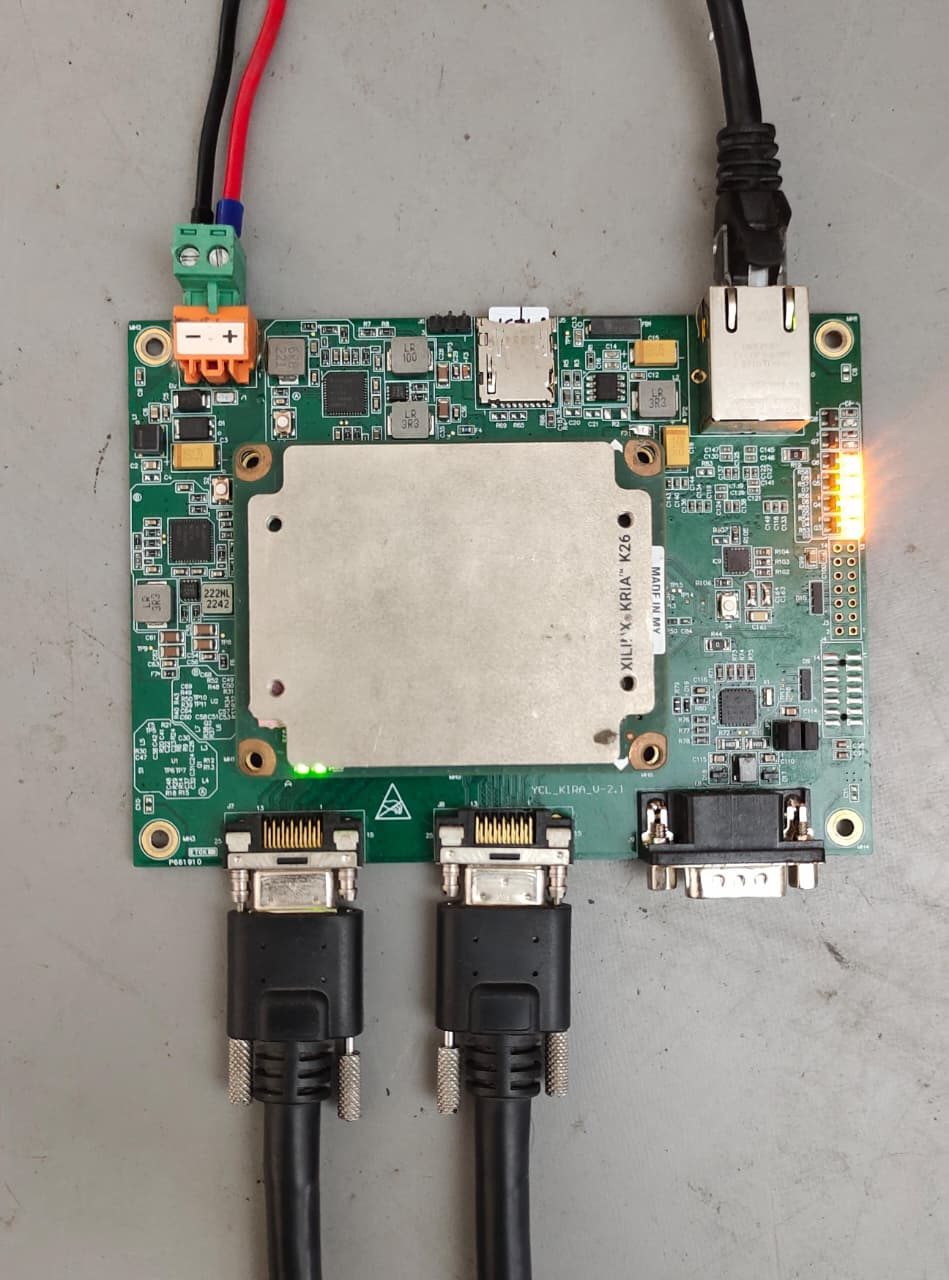

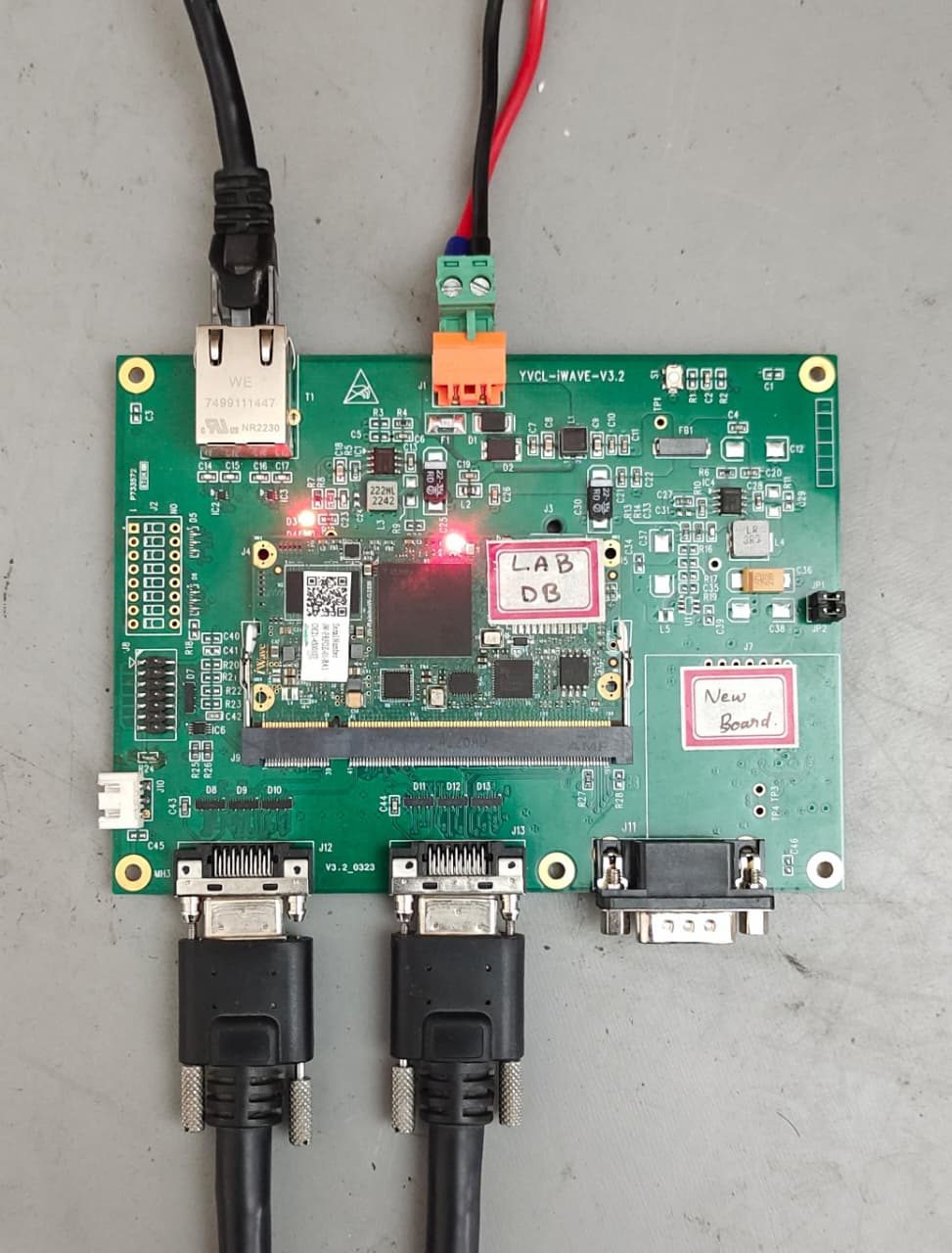

The FPGA boards are custom-designed and manufactured by YantraVision — built specifically for the harsh conditions of industrial sorting lines: vibration, dust, heat, and continuous 24/7 operation. The firmware and processing algorithms running on them are entirely YantraVision IP.

Falcon FPGA processing boards — custom-designed and manufactured by YantraVision

PC-based systems introduce OS jitter — they cannot guarantee the microsecond-level timing that precise ejection valve control demands. DSP boards are the previous generation: purpose-built but throughput-limited, unable to keep pace with modern line speeds. Falcon is what comes next.

| Feature | PC-based | DSP | Falcon FPGA |
|---|---|---|---|
| Latency | Variable / jitter | Limited | <2 ms deterministic |
| Throughput | Moderate | Limited | High (line speed) |
| Hardware | Off-the-shelf | Off-the-shelf | Custom, YantraVision IP |
| Harsh environment ready | ✗ | Partial | ✓ |
| AI feedback loop (CSE) | ✗ | ✗ | ✓ |
| Contaminant capture + audit | ✓ | ✗ | ✓ |
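To see why jitter matters more than latency itself, it helps to convert time into distance travelled by the material. The sketch below is illustrative only: the material speed and the two jitter values are assumed figures, not YantraVision specifications. A fixed latency can be compensated by placing or timing the ejector accordingly; timing uncertainty cannot, and it turns directly into positional error at the valve.

```python
def travel_mm(speed_m_per_s: float, time_s: float) -> float:
    """Distance in millimetres that material travels in the given time."""
    return speed_m_per_s * time_s * 1000.0


# Assumed, hypothetical figures for illustration only.
MATERIAL_SPEED_M_PER_S = 3.0   # speed of material past the ejector (assumption)
DECISION_LATENCY_S = 0.002     # the 2 ms upper bound quoted for Falcon

# A deterministic latency is a constant offset that can be calibrated out.
offset = travel_mm(MATERIAL_SPEED_M_PER_S, DECISION_LATENCY_S)
print(f"Fixed offset at 2 ms latency: {offset:.2f} mm")

# Jitter is uncertainty: the ejector may fire this far from the target.
for label, jitter_s in [("50 microseconds", 50e-6), ("5 milliseconds", 5e-3)]:
    error = travel_mm(MATERIAL_SPEED_M_PER_S, jitter_s)
    print(f"Position uncertainty at {label} of jitter: {error:.2f} mm")
```

Under these assumptions, millisecond-scale jitter produces an uncertainty larger than many of the particles being sorted, while microsecond-scale jitter stays well below a millimetre.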

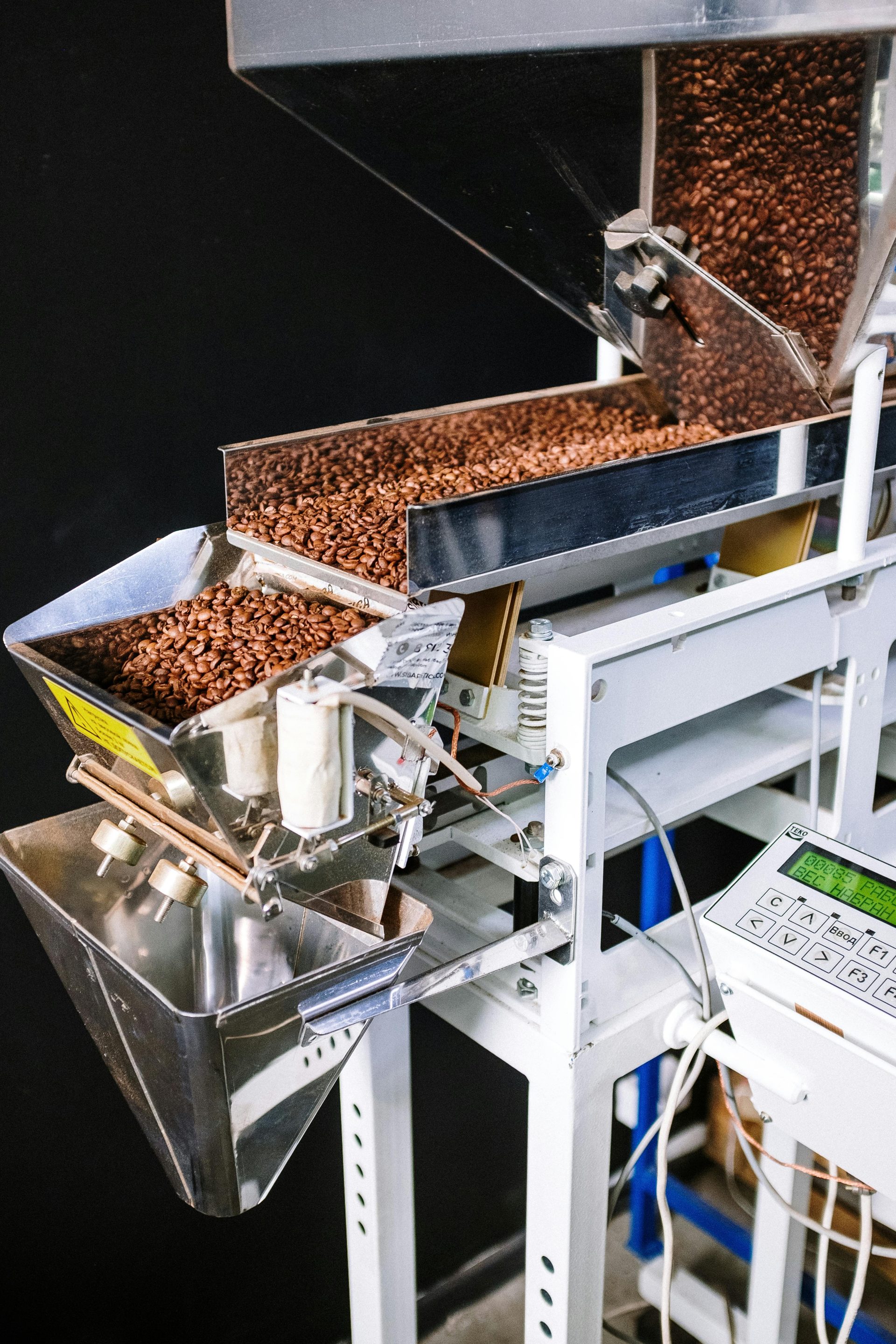

Bulk sorting is not one problem. Sorting rice is different from sorting PET flakes, which is different from sorting copper ore. The contaminants differ, the material speeds differ, the lighting conditions differ.

The Falcon sensing layer is not a fixed menu. YantraVision selects and integrates the right camera and illumination configuration for each application from a vendor partner ecosystem — matched to the FPGA core. All configurations use 2048–4096 pixel line scan sensors running at belt line speed.
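As a rough illustration of what the 2048 to 4096 pixel range means in practice, the sketch below estimates how much of the scan width each pixel covers and how many pixels land on a small defect. The scan width and defect size are assumed values chosen for the example; actual figures depend on the optics and the application.

```python
def pixel_footprint_mm(scan_width_mm: float, pixels: int) -> float:
    """Width of material covered by one pixel across the scan line."""
    return scan_width_mm / pixels


def pixels_across(object_mm: float, scan_width_mm: float, pixels: int) -> float:
    """Approximate number of pixels spanning an object of the given width."""
    return object_mm / pixel_footprint_mm(scan_width_mm, pixels)


# Assumed, hypothetical values for illustration only.
SCAN_WIDTH_MM = 1000.0  # width imaged by one line scan sensor (assumption)
DEFECT_MM = 2.0         # size of a small contaminant (assumption)

for sensor_pixels in (2048, 4096):
    footprint = pixel_footprint_mm(SCAN_WIDTH_MM, sensor_pixels)
    coverage = pixels_across(DEFECT_MM, SCAN_WIDTH_MM, sensor_pixels)
    print(f"{sensor_pixels} px: {footprint:.3f} mm/pixel, "
          f"{coverage:.1f} pixels across a {DEFECT_MM} mm defect")
```

Doubling the pixel count halves the footprint per pixel, which is one reason the sensor choice is matched to the smallest contaminant an application needs to catch.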

The core challenge in bulk sorting is not detection — it is consistency. Contamination type, colour, and size shift hour to hour, not just batch to batch.

Consider a rice sorting line.

One batch: husk. The next hour: stones. Fixed parameters that cleared the first will over-eject on the second — or under-detect and let contamination through.

CSE solves it.

Ejection events are sampled in real time and analysed against the current processing parameters, and corrections are fed back into the processing pipeline continuously, not just at setup.

The result: a sorting line whose accuracy does not degrade over long runs or when material batches change.
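The general shape of such a feedback loop can be sketched in a few lines. This is a generic proportional adjustment written for illustration, not the CSE algorithm: the class name `ThresholdTuner`, the single `threshold` parameter, the target rate, and the gain are all hypothetical, and it assumes each sampled ejection has already been labelled as contaminant or good product by some means.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class ThresholdTuner:
    """Hypothetical tuner: nudges one ejection threshold from sampled ejections."""
    threshold: float                 # score above which an item is ejected
    target_false_rate: float = 0.10  # assumed acceptable share of good product ejected
    gain: float = 0.5                # how strongly each correction is applied
    min_threshold: float = 0.0
    max_threshold: float = 1.0

    def update(self, sampled_is_contaminant: Sequence[bool]) -> float:
        """Adjust the threshold from one sample of ejection events."""
        if not sampled_is_contaminant:
            return self.threshold  # nothing sampled, nothing to correct
        good_ejected = sum(1 for hit in sampled_is_contaminant if not hit)
        false_rate = good_ejected / len(sampled_is_contaminant)
        # Over-ejecting raises the threshold; spare margin lowers it.
        error = false_rate - self.target_false_rate
        proposed = self.threshold + self.gain * error
        self.threshold = min(self.max_threshold, max(self.min_threshold, proposed))
        return self.threshold


tuner = ThresholdTuner(threshold=0.5)
# First sample: 4 of 10 ejections were good product, so the line is over-ejecting.
print(f"after over-ejection sample: {tuner.update([True] * 6 + [False] * 4):.2f}")
# Second sample: every ejection was a real contaminant, so sensitivity can increase.
print(f"after clean sample: {tuner.update([True] * 10):.2f}")
```

A real system would tune many parameters at once and would also need a signal for missed contaminants, which ejection samples alone cannot provide; the sketch only shows the principle of correcting continuously instead of once at setup.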

What is the Falcon Sorting Platform?

How does FPGA-based sorting differ from PC-based or GPU systems?

What industries use the Falcon platform?

What is the Clever Sight Engine (CSE)?

What camera configurations does Falcon support?

Can Falcon be integrated into existing sorting machines?

Falcon ships as a complete platform — FPGA compute, imaging, illumination, and ejection actuation — ready for integration into OEM sorting machines. YantraVision provides full integration support: sensor selection, mechanical interface, software integration, and commissioning.